The Question Everyone Is Quietly Asking

There’s a quiet shift happening in interviews right now—and most candidates can feel it. AI is no longer something people only use for writing emails or fixing code. It has entered the interview room. From tools like ChatGPT to Copilot-style assistants and even real-time AI support, candidates today have more help than ever before. And that’s exactly where the anxiety starts.

If everyone has access to AI, what does that mean for fairness? More importantly, are interviewers starting to notice?

The question many candidates don’t openly ask—but constantly think about—is this: Can interviewers actually detect AI-generated answers, or is this fear overblown? Some worry about being “caught.” Others are unsure where the ethical line is. And many simply want to know how to use AI without hurting their chances.

This matters more than it seems. A single interview can decide an offer, shape confidence, and influence long-term career direction.

In this article, we’ll separate myth from reality—breaking down what interviewers can actually detect, what they can’t, and how to approach AI in a way that works for you, not against you.

What “AI Answers” Actually Mean in 2026

When people talk about “AI answers” in interviews, they often imagine a single thing: a candidate secretly reading responses generated by a tool. In reality, AI usage in 2026 is much more nuanced—and not all of it carries the same level of risk or detectability.

Different Types of AI Usage

There are generally three ways candidates use AI in the interview process:

Pre-written answers: Preparing responses in advance using AI tools, often based on common behavioral or technical questions

Real-time AI assistance: Getting live suggestions or structured answers during the interview itself

AI-assisted practice and coaching: Using AI to simulate questions, refine answers, and improve delivery before the actual interview

Each of these plays a very different role. Practicing with AI beforehand is widely accepted and nearly impossible to detect. Pre-written answers can sometimes sound rehearsed if overused. Real-time assistance, on the other hand, introduces timing and delivery challenges that may raise suspicion if not handled well.

Why AI Answers Feel More Human Now

AI-generated responses have improved significantly. They are no longer overly generic or robotic. Modern systems can adapt tone, incorporate context, and even align with a candidate’s background when given the right input.

The Key Insight

Detection today is less about identifying AI—and more about noticing behavior. How you respond, adapt, and communicate matters far more than whether AI was involved at all.

Can Interviewers Actually Detect AI? The Short Answer

The honest answer is: sometimes—but not reliably. Despite all the discussions around AI in hiring, there is still no consistent or accurate way for interviewers to definitively tell whether a candidate is using AI-generated answers in a live setting.

First, there is no universal “AI detector” for interviews. Unlike written content, where some tools attempt (often unsuccessfully) to flag AI-generated text, live conversations are far more complex. Tone, pacing, context, and human variability make it extremely difficult to isolate AI usage with certainty.

Second, most interviewers rely on human judgment, not technology. They are trained to evaluate communication skills, problem-solving ability, and authenticity—not to run forensic analysis on your answers.

Signal vs Noise

This leads to an important distinction: strong candidates tend to sound natural and confident regardless of whether they use AI tools. Meanwhile, weaker candidates often sound overly scripted or inconsistent—even without using AI at all. In other words, what interviewers notice is not AI, but how convincing and coherent you are.

What Interviewers Think vs What’s Real

Many interviewers do suspect that candidates might be using AI, especially as these tools become more common. However, suspicion is not the same as detection. In most cases, they cannot prove it, and decisions are rarely based on that assumption alone.

Tools like Sensei AI generate real-time answers based on your resume and target role, which can help responses feel more grounded and less generic and may reduce the risk of sounding artificial.


Real Signals That Make Interviewers Suspicious

If interviewers can’t reliably detect AI itself, what do they actually notice? The answer lies in behavioral signals. Most suspicions don’t come from technology—they come from small inconsistencies in how candidates speak, respond, and adapt under pressure.

Common Red Flags Interviewers Pay Attention To

Overly perfect, structured answers with no hesitation

When every response sounds polished to the point of being unnatural, it can raise doubts. Real conversations usually include pauses, small corrections, and thinking moments.

Answers that don’t match resume details

If a candidate gives an answer that contradicts or goes beyond what’s on their resume, it creates a disconnect. Interviewers expect alignment between what you say and your actual experience.

Delayed responses (especially in live interviews)

Noticeable pauses before answering—especially after simple questions—can signal that something external is happening, even if it’s not necessarily AI.

Inconsistent follow-up answers

Strong candidates can expand on their answers when asked deeper questions. If details suddenly change or become vague, it can feel unreliable.

Lack of personal anecdotes or specifics

Generic answers without real examples often feel impersonal. Interviewers look for lived experience, not just well-phrased ideas.

These signals matter far more than any so-called “AI detection tool.” In fact, most hiring decisions are influenced by how authentic and consistent a candidate feels throughout the conversation.

Human Intuition Still Wins

At the end of the day, interviewers are not detecting AI—they are detecting gaps in authenticity. They notice when something feels off, even if they can’t explain why. And that intuition often carries more weight than any technology.

Detection Methods Companies Are Experimenting With

As AI becomes more common in interviews, some companies are starting to experiment with ways to control or limit its use. However, most of these methods focus on behavior and environment rather than directly detecting AI.

Common Detection and Control Methods

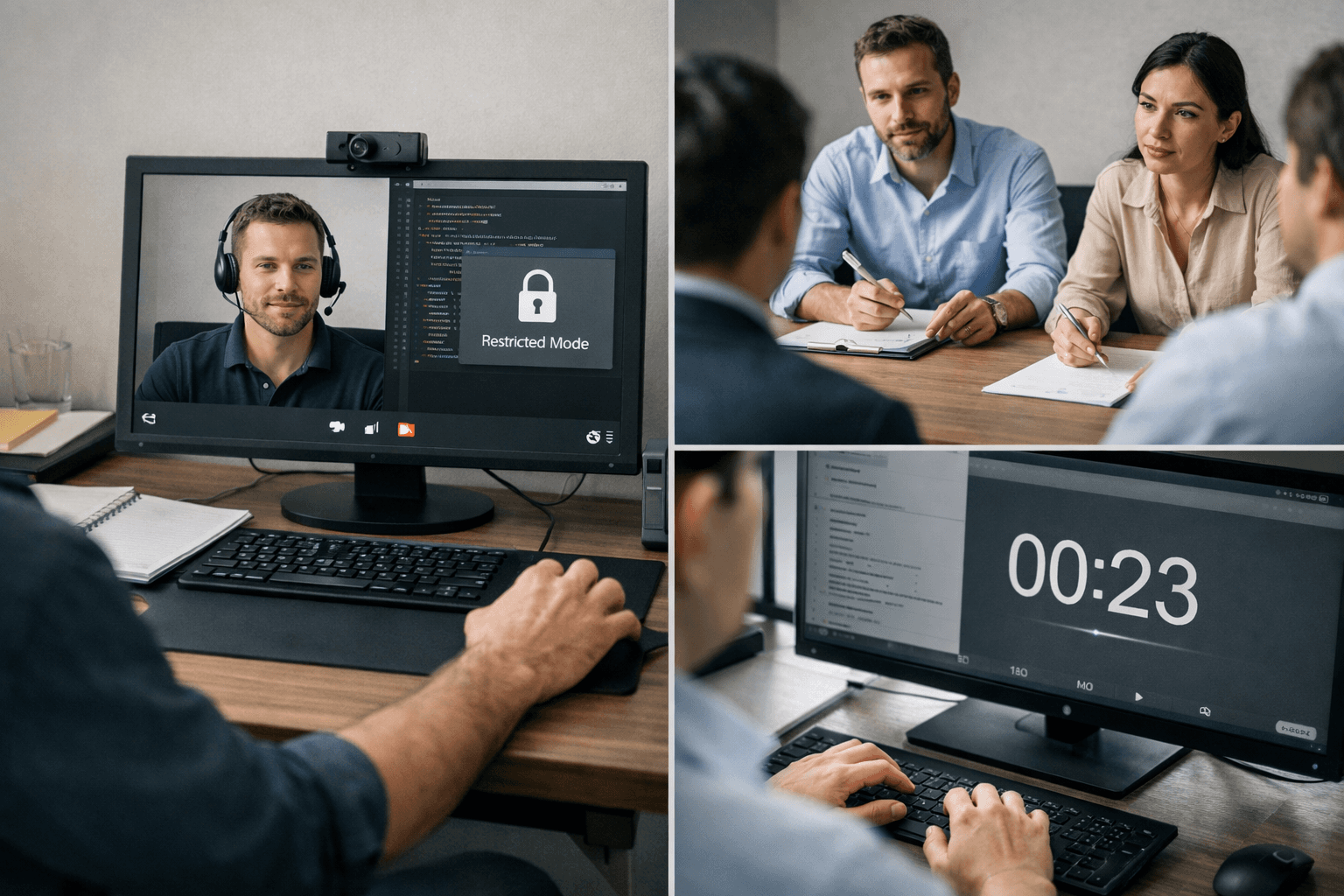

Proctored interviews (screen monitoring, camera tracking)

Some companies require candidates to keep their camera on, share their screen, or use monitoring software. The goal is to ensure that no external tools are visibly being used during the interview.

Locked-down environments (especially for coding interviews)

In technical roles, candidates may be required to work in restricted environments where external tabs, tools, or extensions are blocked. This is particularly common in structured assessments.

Behavioral questioning depth (follow-up pressure testing)

Interviewers often ask layered follow-up questions to test whether candidates truly understand their own answers. This approach is subtle but highly effective.

Time-based answering constraints

Some interviews intentionally limit response time to reduce the possibility of external assistance, forcing candidates to think and respond quickly.

Coding Interviews: A Special Case

Platforms like HackerRank and CoderPad are widely used for technical interviews. Many companies now require candidates to write code live while explaining their thought process. This makes it harder to rely entirely on external help, since understanding must be demonstrated in real time.

Why Most Detection Methods Are Imperfect

Despite these efforts, most detection strategies are far from foolproof. Many can be bypassed with minimal effort, and strict controls can sometimes penalize honest candidates. There is also a real risk of false positives—where natural pauses or nervous behavior are mistaken for suspicious activity.

Sensei AI’s real-time assistance is designed to be fast and hands-free, which is intended to reduce the unnatural delays that often trigger suspicion during interviews.


The Ethics Question (And Why It’s Not Black and White)

The debate around AI in interviews often turns into a simple question: Is this cheating? But the reality is far more nuanced, and opinions vary depending on who you ask.

Two Opposing Views

“AI is cheating”

Some argue that using AI during an interview creates an unfair advantage. The concern is that candidates may rely on tools instead of their own knowledge, making it harder to assess true ability.

“AI is just a tool”

Others compare AI to resources like Google or Grammarly. From this perspective, AI is simply part of the modern workflow—something professionals already use in their daily jobs.

Both sides raise valid points, which is why most companies haven’t taken a strict, universal stance.

A More Practical Perspective

In reality, many employers are less concerned about how you arrive at an answer and more focused on what that answer reveals. They want to understand your thinking process, communication skills, and ability to handle real-world situations.

This shifts the conversation from “Are you using AI?” to “Are you using it well?”

The key difference lies in misuse vs smart augmentation. Relying entirely on AI without understanding your answers can backfire. Using AI to support and refine your thinking, however, can actually improve performance.

The Line Most Companies Care About

At the end of the day, most hiring decisions come down to two simple questions:

Can you clearly explain your answers when challenged?

Can you actually perform the job after you’re hired?

If the answer to both is yes, the method matters far less than people assume.

How to Use AI Without Getting Caught (or Sounding Fake)

Using AI in interviews is not inherently risky—using it poorly is. The goal is not to hide AI, but to make sure your responses still sound like you: natural, consistent, and grounded in real experience.

Practical Strategies That Actually Work

Personalize everything (resume-based answers)

Generic answers are the fastest way to sound artificial. Strong responses should reflect your past roles, projects, and decisions. The more specific you are, the more credible you sound.

Practice speaking naturally (not reading)

Even the best AI-generated answer can fail if it’s delivered like a script. Practice turning structured responses into conversational language. Small pauses and imperfections actually make you sound more human.

Use AI as a guide, not a script

Think of AI as a framework builder. It can help you organize your thoughts, but you still need to adapt the wording in real time.

Prepare for follow-up questions deeply

Interviewers rarely stop at your first answer. If you can’t expand, clarify, or defend your response, it creates doubt. Depth matters more than polish.

Think of AI as a Copilot, Not a Replacement

AI can support you, but it cannot replace you. You are still the one being evaluated—your judgment, your communication, your ability to think under pressure. The best candidates use AI to enhance their thinking, not substitute it.

Bad AI Use vs Smart AI Use

| Approach | What Happens | Interviewer Reaction |
|---|---|---|
| Copy-paste answers | Sounds robotic | Suspicion |
| Personalized AI support | Sounds natural | Confidence |
| No preparation | Inconsistent answers | Doubt |

Sensei AI listens to interview questions and generates answers in real time based on your uploaded resume and role details, helping you stay aligned with your actual experience rather than sounding generic.


The Future: Will AI Detection Get Better?

As AI continues to evolve, a natural question follows: Will detection eventually catch up? The answer is complicated—and slightly counterintuitive.

Where Things Are Headed

On one side, AI detection tools are improving, but at a relatively slow pace. Most current solutions still struggle with accuracy, especially in live conversations where tone, timing, and context are constantly shifting. False positives remain a major concern, and there is no widely trusted system for real-time interview detection.

On the other side, AI generation is advancing much faster. Modern AI can already produce highly personalized, context-aware responses that closely match a candidate’s background and communication style. As these systems continue to improve, distinguishing between “AI-assisted” and “fully human” answers will become even harder.

This creates what many describe as an “arms race”—where detection tries to catch up, but generation keeps moving ahead.

Why Human Evaluation Still Dominates

Despite all technological progress, hiring decisions are still largely driven by human judgment. Interviewers are not evaluating transcripts—they are evaluating people. Subtle cues like confidence, clarity, and adaptability are difficult for any detection system to measure accurately.

What Will Matter More Than Detection

In the long run, success in interviews will depend less on whether AI is used and more on how candidates perform in key areas:

Communication: Can you express ideas clearly and adapt your message?

Problem-solving: Can you think through challenges in real time?

Authenticity: Do your answers feel genuine and consistent?

These are the qualities that no detection tool can replace—and the ones that ultimately decide who gets hired.

Final Takeaway: What Actually Wins You the Job

At the end of the day, interviewers are not hiring “AI answers”—they are hiring people. Tools and technology can support your preparation, but they cannot replace authenticity, clarity, and problem-solving skills.

Key Points to Remember

Detection is inconsistent: Companies may try different methods, but none can reliably detect AI in live interviews.

Behavior matters more than tools: Natural pacing, clear explanations, and alignment with your own experience signal competence far more than whether AI is involved.

Smart AI use can help—but only if used correctly: Using AI as a copilot, not a crutch, helps you communicate clearly and consistently.

For those who want extra preparation, tools like Sensei AI’s AI Playground can help you practice responses and think through interview scenarios in advance. This allows you to enter interviews more confident, grounded, and ready to show your true capabilities.


FAQs

What is the 30% rule for AI?

The “30% rule” for AI is an informal guideline, not an established standard. It suggests that AI can handle up to roughly 30% of many knowledge-based tasks, such as drafting, summarizing, or generating ideas, while human input remains necessary for accuracy, judgment, and personalization. In interviews, this means AI can help structure answers or provide direction, but relying on it for 100% of your response often leads to generic or less convincing communication.

Can interviewers detect AI use in final-round interviews?

In most cases, interviewers cannot reliably detect AI use—even in final rounds. However, final interviews tend to involve deeper questioning, more follow-ups, and higher expectations for consistency. This makes it easier for interviewers to notice gaps in understanding or authenticity. So while AI itself isn’t directly detectable, poor delivery or shallow answers can still raise concerns.

How could AI skills impact your career in 2026?

AI skills are quickly becoming a competitive advantage across industries. Knowing how to use AI effectively—whether for problem-solving, communication, or productivity—can significantly improve your efficiency and decision-making. In 2026, candidates who can combine domain expertise with smart AI usage are more likely to stand out and adapt to evolving job requirements.

How many jobs will AI take by 2026?

There is no exact number, but most research suggests AI will reshape jobs rather than simply replace them. Reports from organizations like the World Economic Forum estimate that while some roles will decline, millions of new roles will also be created. By 2026, AI is expected to automate certain repetitive tasks, but human skills like creativity, communication, and critical thinking will remain essential.

Shin Yang

Shin Yang is a growth strategist at Sensei AI, focused on SEO optimization, market expansion, and customer support. He uses his digital marketing expertise to improve visibility and user engagement, helping job seekers get the most out of Sensei AI’s real-time interview assistance. His work ensures that candidates have a smoother experience as they navigate the application process.


Sensei AI

hi@senseicopilot.com